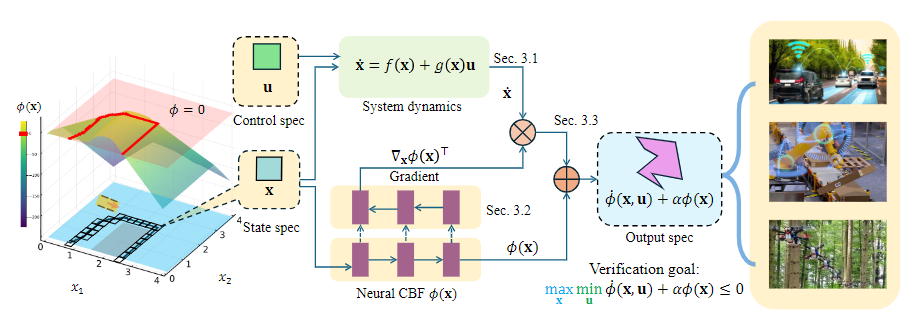

Novel verification framework for neural control barrier functions (CBFs), improving safety and efficiency in robotics and AI safety. High impact.

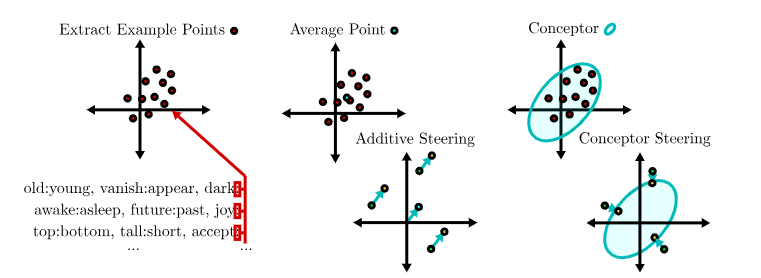

Applies 'conceptors' to precisely control LLM outputs, advancing LLM control and safety. High impact.

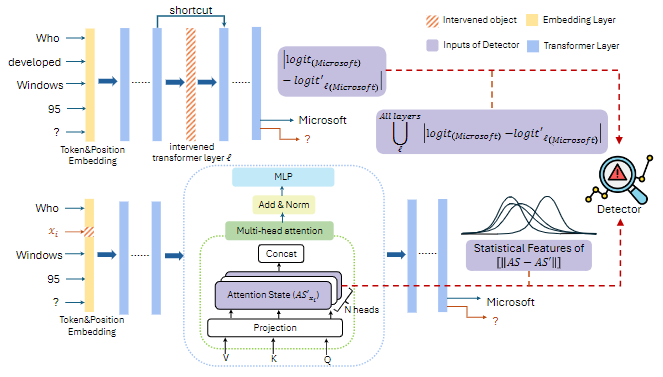

Novel causal analysis for LLM safety that detects a range of misbehaviors, offering a broad approach to LLM safety concerns.

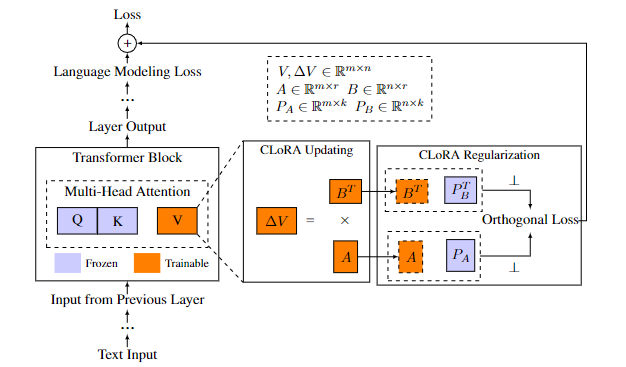

Addresses catastrophic forgetting in LLMs: CLoRA improves performance on both new and previously learned tasks, with implications for LLM training.

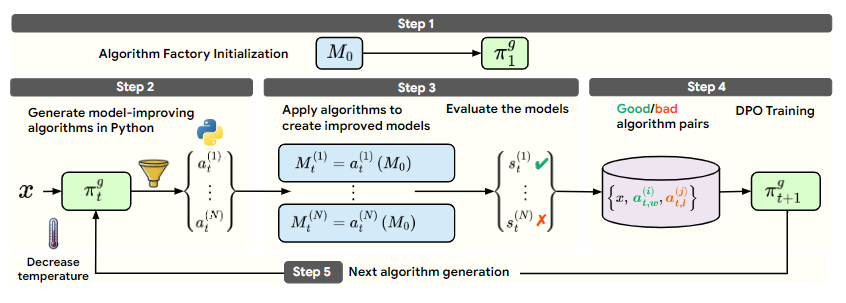

Groundbreaking self-developing framework that lets LLMs create their own self-improvement algorithms, showcasing the potential for autonomous AI.