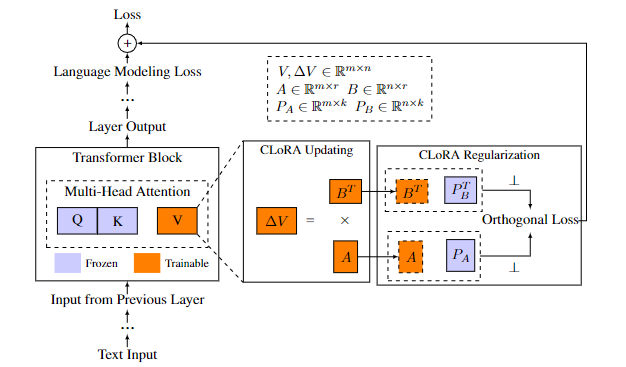

Addresses catastrophic forgetting in LLMs. CLoRA improves performance on both new and previously learned tasks, with implications for how LLMs are trained.

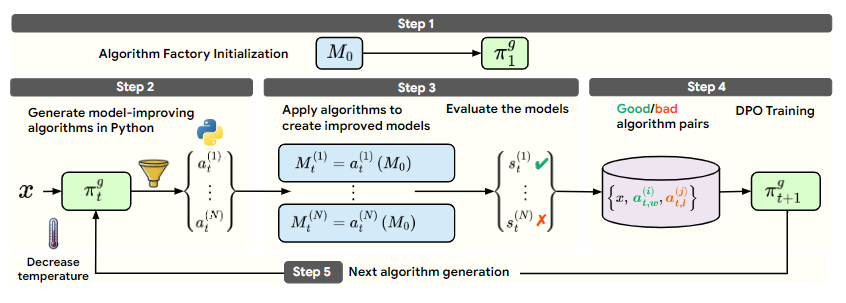

Groundbreaking self-developing framework lets LLMs create self-improvement algorithms, showcasing potential for autonomous AI.

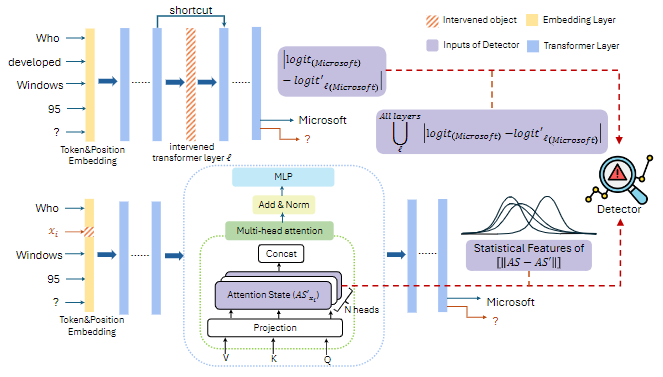

Novel causal analysis for LLM safety that detects various misbehaviors. Presented as a comprehensive solution to LLM safety concerns.

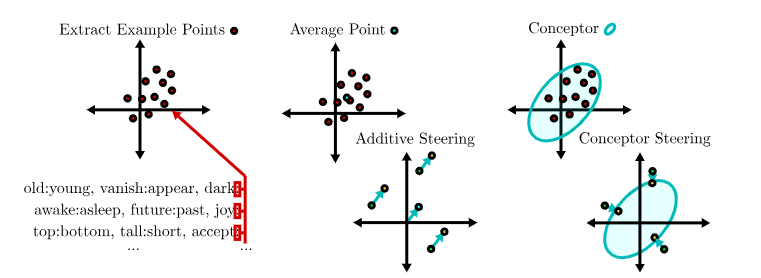

Introduces 'conceptors' for precise control of LLM outputs, advancing LLM safety. High impact.

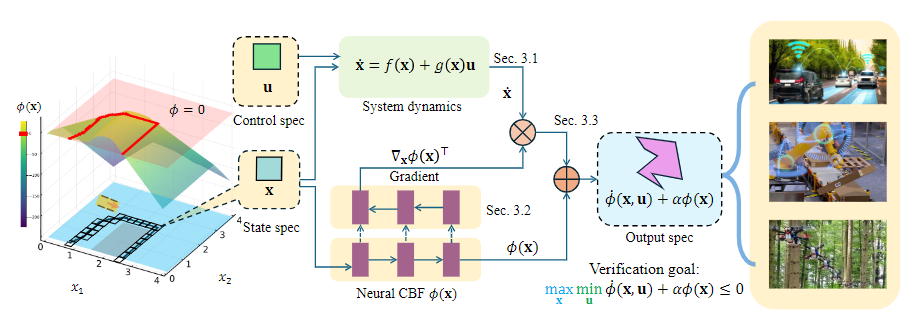

Novel verification framework for neural control barrier functions (CBFs), enhancing safety and efficiency in robotics and AI safety applications. High impact.

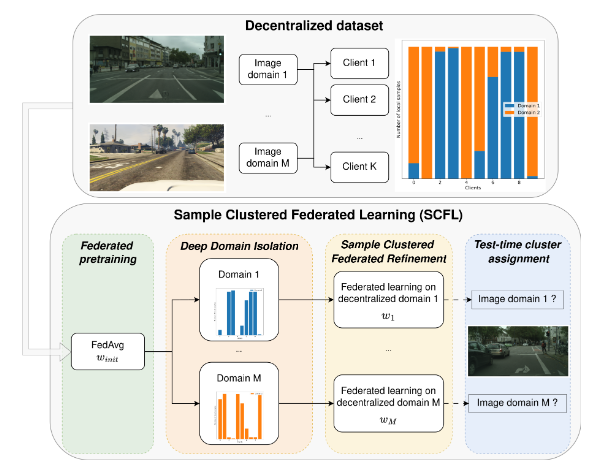

Introduces Deep Domain Isolation and Sample Clustered Federated Learning for improved performance in non-IID settings.

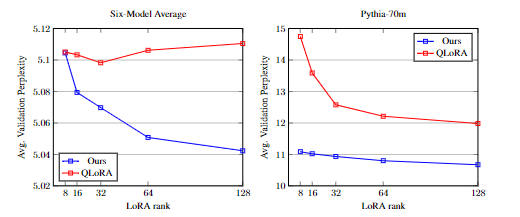

Introduces a quantization-aware initialization for LoRA to mitigate quantization errors and improve model performance.
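
The blurb above does not say how the initialization is computed. One common way to make a LoRA initialization quantization-aware, which is an assumption here and not necessarily this paper's method, is to set the low-rank factors to the best rank-r approximation of the quantization residual `W - Q(W)`. A minimal NumPy sketch, where `quantize_uniform` and `residual_lora_init` are hypothetical names and the quantizer is a toy stand-in:

```python
import numpy as np

def quantize_uniform(w, bits=4):
    """Toy symmetric uniform quantizer (a stand-in, not a real scheme)."""
    levels = 2 ** (bits - 1) - 1
    scale = np.abs(w).max() / levels
    return np.round(w / scale) * scale

def residual_lora_init(w, rank, bits=4):
    """Return (w_q, a, b) such that w_q + b @ a approximates w."""
    w_q = quantize_uniform(w, bits)
    # Truncated SVD gives the best rank-r approximation of the residual.
    u, s, vt = np.linalg.svd(w - w_q, full_matrices=False)
    root_s = np.sqrt(s[:rank])
    b = u[:, :rank] * root_s           # shape (out_features, rank)
    a = root_s[:, None] * vt[:rank]    # shape (rank, in_features)
    return w_q, a, b

rng = np.random.default_rng(0)
w = rng.normal(size=(64, 32))          # random stand-in for a weight matrix
w_q, a, b = residual_lora_init(w, rank=8)

err_plain = np.linalg.norm(w - w_q)             # quantization error alone
err_init = np.linalg.norm(w - (w_q + b @ a))    # error after adapter init
print(err_init < err_plain)
```

Unlike the standard LoRA initialization, where one factor starts at zero so the adapter is initially a no-op, this sketch starts the adapter at a nonzero value that absorbs part of the quantization error.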

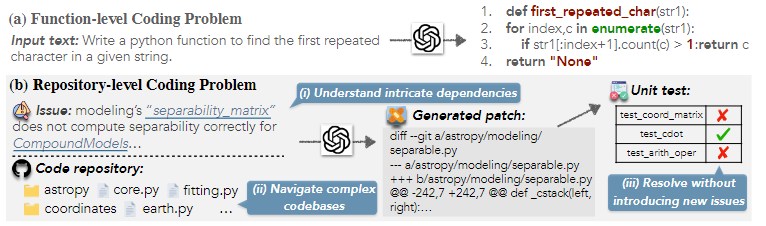

Enhances AI software engineering by introducing RepoGraph, a plug-in module for repository-level code understanding.

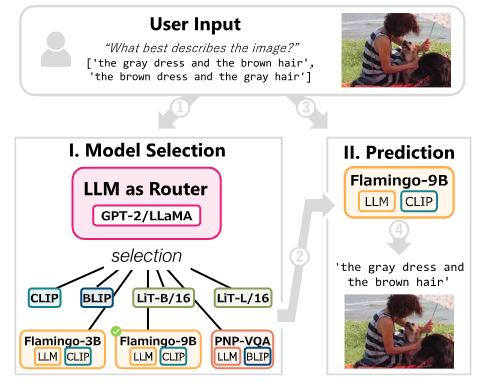

Challenges the conventional wisdom on VLMs and LLMs in image classification, proposing a lightweight fix for improved efficiency.

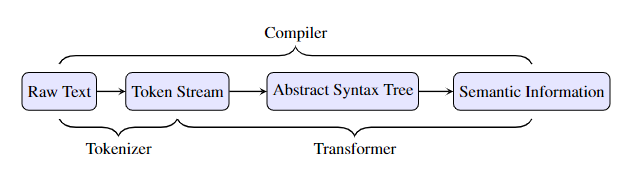

Provides a formal investigation of transformers as compilers, showing that the number of parameters scales logarithmically with input length.

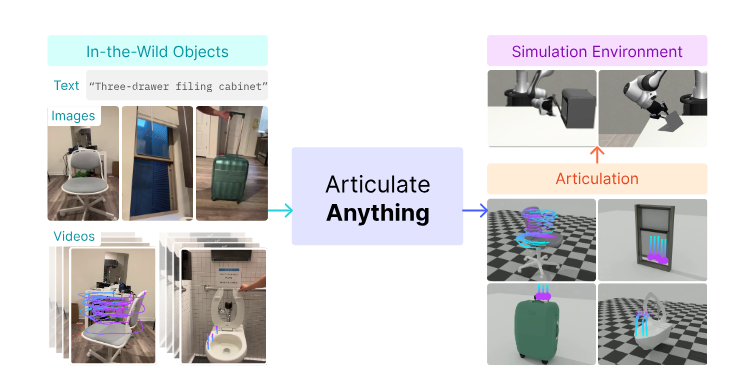

Automates creation of interactive 3D objects; uses vision-language models; state-of-the-art performance.

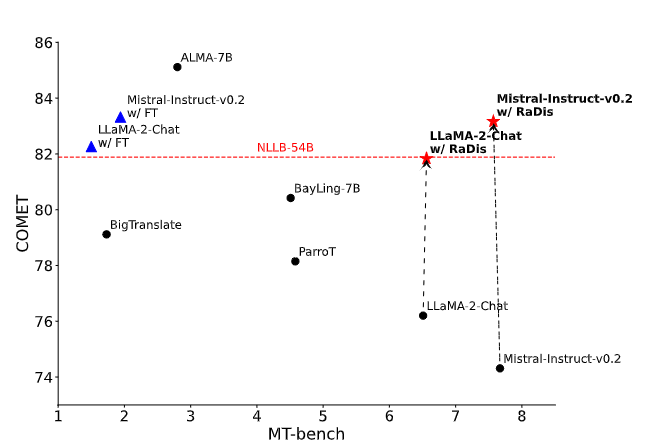

Novel approach to improve translation without losing general abilities; uses rationales; enhances translation performance.

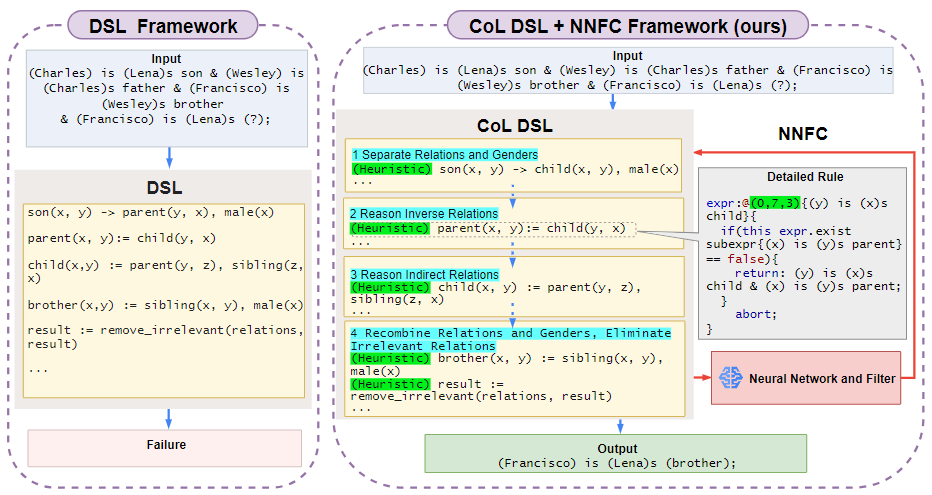

Novel program synthesis approach; improves efficiency and reliability; addresses limitations of existing methods.

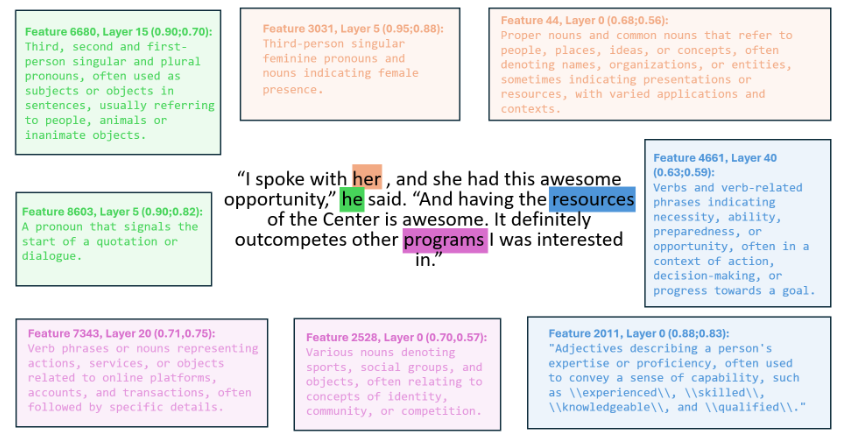

Automated pipeline for interpreting LLM features; uses sparse autoencoders and LLMs; novel explanation scoring techniques.

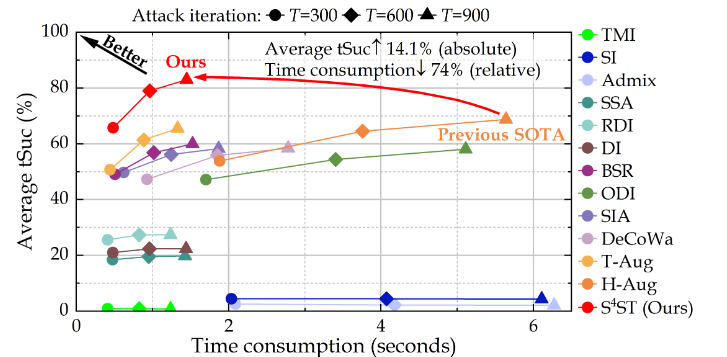

Highly efficient transferable targeted attacks; addresses gradient vanishing; state-of-the-art performance.