Large language models (LLMs) are transforming AI, but controlling their output remains a challenge. While methods like RLHF and fine-tuning exist, they're computationally expensive and may not generalize well. Prompt engineering, while simpler, often yields inconsistent results. Activation engineering offers a promising alternative: directly tweaking the model's activations during inference to steer its output. But traditional methods, relying on single "steering vectors," often fall short, especially on complex tasks.

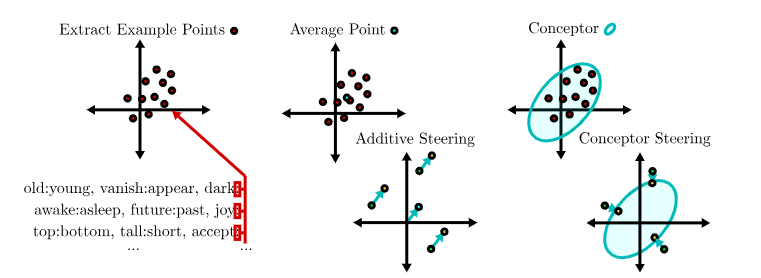

This blog post dives into a research paper, "Steering Large Language Models using Conceptors: Improving Addition-Based Activation Engineering," which introduces a novel approach: using conceptors to achieve more precise LLM control. Conceptors are mathematical constructs representing sets of activation vectors as ellipsoidal regions. Think of them as soft projection matrices: rather than keeping or discarding a direction of activation space outright, a conceptor scales each direction by a factor between 0 and 1, which allows more nuanced control than a single steering vector.
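To make the "soft projection" picture concrete, here is a minimal NumPy sketch using the standard conceptor definition, C = R (R + α⁻² I)⁻¹, where R is the correlation matrix of the activations and α is the aperture. The function name `compute_conceptor`, the toy dimensions, and the synthetic data are illustrative assumptions, not code from the paper.

```python
import numpy as np


def compute_conceptor(activations: np.ndarray, aperture: float) -> np.ndarray:
    """Build a conceptor from activations of shape (n_samples, dim).

    Uses the standard definition C = R (R + aperture^-2 I)^-1,
    where R is the (uncentered) correlation matrix of the activations.
    """
    n_samples, dim = activations.shape
    correlation = activations.T @ activations / n_samples
    return correlation @ np.linalg.inv(correlation + aperture ** -2 * np.eye(dim))


# Synthetic stand-in for cached activations (hypothetical data):
# each of the 4 directions has a very different variance.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 4)) * np.array([3.0, 1.0, 0.3, 0.05])

C = compute_conceptor(X, aperture=1.0)

# Eigenvalues act as per-direction "soft" gains between 0 and 1:
# high-variance directions are kept almost fully, low-variance ones are damped.
eigvals = np.linalg.eigvalsh((C + C.T) / 2)
print(np.round(eigvals, 3))
```

The aperture controls how generous the conceptor is: a larger value pushes the gains toward 1, a smaller one pushes them toward 0.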

The core idea is simple yet powerful: instead of a single vector, conceptors capture the distribution of activation patterns associated with a specific task. This richer representation allows for more robust and accurate steering. The authors hypothesize, and demonstrate, that:

The researchers tested their conceptor approach against traditional additive steering vectors using two LLMs: GPT-J 6B and GPT-NeoX 20B. They used a dataset of input-output function examples (antonyms, tense changes, translation, singular-plural, country-capital, capitalization) from prior work.
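The difference between the two methods being compared can be sketched in a few lines. This is a toy illustration under stated assumptions: the additive baseline adds a scaled mean activation vector to the hidden state, while conceptor steering is assumed here to multiply the hidden state by the conceptor matrix and a scaling factor. The function names, the `beta` parameter, and the random data are hypothetical, and the paper's exact formulation may differ.

```python
import numpy as np


def steering_vector(task_activations: np.ndarray) -> np.ndarray:
    """Additive baseline: the mean of the task's cached activations."""
    return task_activations.mean(axis=0)


def additive_steer(h: np.ndarray, v: np.ndarray, beta: float) -> np.ndarray:
    """Shift the hidden state along a single direction."""
    return h + beta * v


def compute_conceptor(activations: np.ndarray, aperture: float) -> np.ndarray:
    """Standard conceptor: C = R (R + aperture^-2 I)^-1."""
    n_samples, dim = activations.shape
    correlation = activations.T @ activations / n_samples
    return correlation @ np.linalg.inv(correlation + aperture ** -2 * np.eye(dim))


def conceptor_steer(h: np.ndarray, C: np.ndarray, beta: float) -> np.ndarray:
    """Assumed form of conceptor steering: softly project, then rescale."""
    return beta * (C @ h)


# Toy stand-ins for activations cached at one layer (hypothetical data).
rng = np.random.default_rng(0)
task_acts = rng.normal(size=(300, 8)) * np.linspace(2.0, 0.1, 8) + 0.5
h = rng.normal(size=8)  # hidden state to be steered

v = steering_vector(task_acts)
C = compute_conceptor(task_acts, aperture=0.5)

h_add = additive_steer(h, v, beta=2.0)
h_con = conceptor_steer(h, C, beta=2.0)
```

The additive version moves every hidden state by the same offset, whereas the conceptor version reshapes each hidden state according to how the task's activations are distributed across directions.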

For each function, they:

The results were clear and consistent: conceptor-based steering significantly outperformed additive steering across all functions and both LLMs, and the advantage was most pronounced on complex tasks. Combining conceptors with Boolean operations also outperformed averaging steering vectors when steering toward multiple goals. Mean-centering improved additive steering, but conceptors still maintained a clear lead. The study also identified the best-performing steering layers for each model and task.
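For readers unfamiliar with Boolean operations on conceptors, the sketch below uses the standard conceptor-logic definitions: NOT C = I − C, C₁ AND C₂ = (C₁⁻¹ + C₂⁻¹ − I)⁻¹, and OR via De Morgan's law. The inverse-based AND shown here only applies when both conceptors are invertible. The helper names and toy data are assumptions for illustration, and `mean_centered_vector` reflects an assumed reading of mean-centering (subtracting the mean activation of a broader reference set), which may not match the paper's exact procedure.

```python
import numpy as np


def compute_conceptor(activations: np.ndarray, aperture: float = 1.0) -> np.ndarray:
    """Standard conceptor: C = R (R + aperture^-2 I)^-1."""
    n_samples, dim = activations.shape
    correlation = activations.T @ activations / n_samples
    return correlation @ np.linalg.inv(correlation + aperture ** -2 * np.eye(dim))


def conceptor_not(C: np.ndarray) -> np.ndarray:
    return np.eye(C.shape[0]) - C


def conceptor_and(C1: np.ndarray, C2: np.ndarray) -> np.ndarray:
    # Valid only when both conceptors are invertible.
    identity = np.eye(C1.shape[0])
    return np.linalg.inv(np.linalg.inv(C1) + np.linalg.inv(C2) - identity)


def conceptor_or(C1: np.ndarray, C2: np.ndarray) -> np.ndarray:
    # De Morgan: A OR B = NOT(NOT A AND NOT B).
    return conceptor_not(conceptor_and(conceptor_not(C1), conceptor_not(C2)))


def mean_centered_vector(task_acts: np.ndarray, reference_acts: np.ndarray) -> np.ndarray:
    # Assumed form of mean-centering for the additive baseline.
    return task_acts.mean(axis=0) - reference_acts.mean(axis=0)


# Two toy "tasks" with different activation statistics (hypothetical data).
rng = np.random.default_rng(0)
acts_a = rng.normal(size=(200, 3)) * np.array([2.0, 1.0, 0.5])
acts_b = rng.normal(size=(200, 3)) * np.array([0.5, 1.0, 2.0])

C_a = compute_conceptor(acts_a)
C_b = compute_conceptor(acts_b)

C_both = conceptor_and(C_a, C_b)    # directions important to both tasks
C_either = conceptor_or(C_a, C_b)   # directions important to either task

# Additive baselines: a plain average of two steering vectors,
# and a mean-centered vector relative to the pooled activations.
v_avg = (acts_a.mean(axis=0) + acts_b.mean(axis=0)) / 2
v_centered = mean_centered_vector(acts_a, np.vstack([acts_a, acts_b]))
```

The contrast with averaging is that `C_both` and `C_either` remain full matrices describing regions of activation space, while `v_avg` collapses both tasks into a single direction.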

While promising, the study acknowledges limitations:

Future research should address these limitations and explore:

This research strongly suggests that conceptor-based steering offers a significant improvement over traditional additive methods for controlling LLMs. The ability to capture and manipulate the distribution of activation patterns opens up exciting possibilities for more precise and robust LLM control, paving the way for more reliable and safer AI systems. The increased computational cost is a hurdle, but the potential benefits warrant further investigation.